Are you ready to unlock deeper insights from your data using Principal Component Analysis (PCA)? Scikit-Learn's PCA is a powerful tool for dimensionality reduction. It distills many correlated features into a small number of components that capture most of the variation, which makes patterns and relationships easier to identify. However, many users feel overwhelmed by the technical details or find too few practical examples. This step-by-step, problem-solving guide addresses both problems, so you can confidently apply PCA to your own data analysis projects.

Every data scientist or analyst knows the challenge of high-dimensional datasets. When a dataset has dozens or hundreds of features, understanding and visualizing it becomes a daunting task, and the sheer complexity makes it hard to discern the underlying patterns and structures. Enter Principal Component Analysis (PCA). PCA simplifies your data by reducing its dimensionality while retaining as much of its variance as possible. This guide walks you through the essentials of PCA with Scikit-Learn, with actionable advice and practical examples to help you gain deeper insights into your data.

Quick Reference

- Start with a correlation matrix to see how your variables relate. Strongly correlated features are a sign that PCA can compress the data effectively.

- Use Scikit-Learn's PCA to transform your dataset into principal components that retain as much of the variance as possible in fewer dimensions.

- Avoid a common mistake: skipping feature scaling before applying PCA. Standardize your features first so that no feature dominates the analysis simply because of its units.
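The sketch below shows why the last point matters, using the Iris data bundled with Scikit-Learn. It multiplies one feature by 1000, a hypothetical unit change chosen only for illustration. It then compares the first principal component with and without standardization.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

X = load_iris().data.copy()
# Hypothetical unit change: multiply the first feature (sepal length) by 1000
X[:, 0] = X[:, 0] * 1000

# Without scaling, the rescaled feature dominates the first component
unscaled = PCA(n_components=2).fit(X)
print("Unscaled PC1 loadings:", np.round(unscaled.components_[0], 3))
print("Unscaled explained variance ratio:", np.round(unscaled.explained_variance_ratio_, 3))

# After standardization, multiplying a column by a constant no longer matters
scaled = PCA(n_components=2).fit(StandardScaler().fit_transform(X))
print("Scaled PC1 loadings:", np.round(scaled.components_[0], 3))
print("Scaled explained variance ratio:", np.round(scaled.explained_variance_ratio_, 3))
```

Without scaling, the first component should point almost entirely along the rescaled feature, with an explained variance ratio close to 1. After standardization, the unit change has no effect on the result.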

Detailed How-To Sections

Understanding PCA

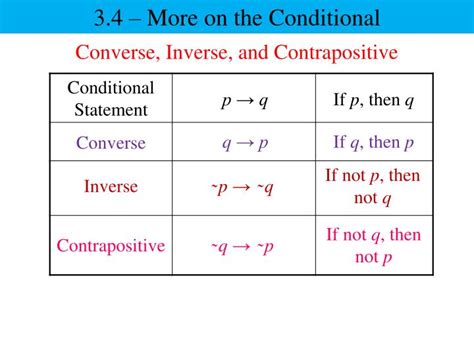

Principal Component Analysis (PCA) is a statistical technique used for dimensionality reduction. It rotates your dataset into a new coordinate system: the first axis captures the greatest variance, the second axis the next greatest, and so on. These axes are called principal components. Keeping only the first few lets you represent your data in a lower-dimensional space while retaining most of the variation present in the original data.

Here’s a simple breakdown of how PCA works:

- Center each feature to a mean of zero. When features are measured on different scales, also scale them to unit variance (standardization).

- Compute the covariance matrix of the data.

- Calculate the eigenvalues and eigenvectors of the covariance matrix.

- Sort the eigenvectors by decreasing eigenvalues and choose the top k eigenvectors to form a basis for the new feature subspace.

- Project your data onto this new subspace.
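The five steps above can be sketched directly in NumPy. This is a teaching sketch that assumes the Iris data stands in for your dataset and that k = 2. Scikit-Learn's PCA reaches the same projection by a different route: depending on the solver, it typically uses a singular value decomposition rather than an explicit covariance matrix. The sign of each principal component is arbitrary, so the comparison at the end uses absolute values.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

X = load_iris().data

# Step 1: standardize each feature (zero mean, unit variance)
X_std = (X - X.mean(axis=0)) / X.std(axis=0)

# Step 2: covariance matrix of the features (columns are variables)
cov = np.cov(X_std, rowvar=False)

# Step 3: eigenvalues and eigenvectors; eigh is meant for symmetric matrices
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Step 4: sort by decreasing eigenvalue and keep the top k eigenvectors
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]
k = 2
W = eigenvectors[:, :k]

# Step 5: project the data onto the k-dimensional subspace
X_projected = X_std @ W

# Cross-check against scikit-learn, ignoring the arbitrary sign of each component
X_sklearn = PCA(n_components=k).fit_transform(StandardScaler().fit_transform(X))
print("Matches scikit-learn:", np.allclose(np.abs(X_projected), np.abs(X_sklearn)))
print("Explained variance ratio:", eigenvalues[:k] / eigenvalues.sum())
```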

Getting Started with Scikit-Learn PCA

Scikit-Learn provides a convenient implementation of PCA in sklearn.decomposition.PCA. It centers each feature automatically but does not scale it, so standardizing the features remains your job. Below are the steps to use PCA in your data analysis:

Step 1: Import necessary libraries

First, make sure scikit-learn, NumPy, pandas, and Matplotlib are installed (for example, with pip install scikit-learn pandas matplotlib), then import them:

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import StandardScaler
from sklearn.decomposition import PCA
import matplotlib.pyplot as plt
```

Step 2: Load and prepare your dataset

For this example, we’ll use the famous Iris dataset:

```python
from sklearn.datasets import load_iris

iris = load_iris()
df = pd.DataFrame(data=np.c_[iris['data'], iris['target']],
                  columns=iris['feature_names'] + ['target'])
```

Step 3: Standardize your data

Standardizing rescales every feature to zero mean and unit variance, so no feature dominates the components merely because it is measured in larger units:

```python
x = df.drop('target', axis=1)
scaler = StandardScaler()
x_scaled = scaler.fit_transform(x)
```

Step 4: Apply PCA

Now you’re ready to apply PCA to reduce the dimensionality of your data:

```python
pca = PCA(n_components=2)
principal_components = pca.fit_transform(x_scaled)
```

Step 5: Visualize the results

To visualize the transformed data, we can plot it:

```python
plt.figure(figsize=(8, 6))
plt.scatter(principal_components[:, 0], principal_components[:, 1], c=df['target'], edgecolor='k', alpha=0.6)
plt.xlabel('First Principal Component')
plt.ylabel('Second Principal Component')
plt.title('PCA of Iris dataset')
plt.show()
```

Advanced Techniques

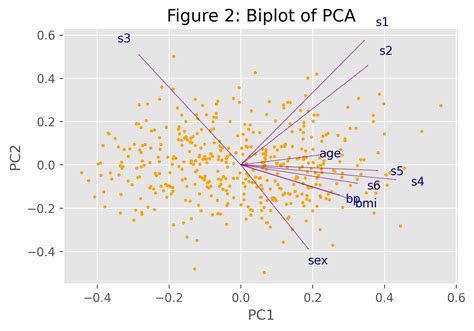

Once you've grasped the basics of PCA, it's time to explore some advanced techniques that will help you squeeze even more value out of your data.

Choosing the Right Number of Components

Determining how many principal components to retain is critical for effective dimensionality reduction. Here’s how to decide:

- Use the explained variance ratio, which tells you how much information (variance) each principal component holds.

- Plot the cumulative explained variance and look for the ‘elbow’ point where the marginal gain in explained variance drops sharply.

Here’s an example:

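```python
# Refit PCA without n_components so every component is kept; the two-component
# model from Step 4 would show only the first two points of the curve
pca_full = PCA().fit(x_scaled)
cumulative = np.cumsum(pca_full.explained_variance_ratio_)

plt.figure(figsize=(8, 6))
plt.plot(range(1, len(cumulative) + 1), cumulative, marker='o')
plt.xlabel('Number of Components')
plt.ylabel('Cumulative Explained Variance')
plt.title('Explained Variance')
plt.show()
```

Handling Non-Gaussian Data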

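PCA finds components that are linear combinations of the original features, and it summarizes the data using only variances and covariances. That summary fully describes multivariate Gaussian data. When the structure is non-linear or strongly non-Gaussian, however, a linear projection can miss it. Examples include classes arranged in concentric rings or along curved surfaces. In those cases, consider alternatives such as Kernel PCA or t-Distributed Stochastic Neighbor Embedding (t-SNE).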

Best Practices

Follow these best practices to ensure the most effective use of PCA:

- Always scale your data before applying PCA to avoid features with larger scales dominating the analysis.

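- Fit the scaler and PCA on training data only, then apply the fitted transformations to validation or test data. This prevents information from held-out data from leaking into the components, and it lets you evaluate any downstream model fairly.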

- Inspect each component's loadings (the components_ attribute in Scikit-Learn) to see which original features drive it and what pattern it represents.
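These practices can be combined with a Scikit-Learn Pipeline, as sketched below. The choice of LogisticRegression as the downstream model, the 70/30 split, and two components are assumptions for illustration. StandardScaler and PCA are fitted only on the training split, cross-validation refits the whole pipeline in each fold, and components_ provides the loadings for interpretation.

```python
import pandas as pd
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

iris = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.3, random_state=0, stratify=iris.target)

# Scaler and PCA learn their parameters from the training split only;
# the test split is transformed with those already-fitted parameters
model = make_pipeline(StandardScaler(), PCA(n_components=2),
                      LogisticRegression(max_iter=1000))
model.fit(X_train, y_train)
print("Test accuracy:", round(model.score(X_test, y_test), 3))

# Cross-validation refits scaler, PCA, and classifier inside each fold
scores = cross_val_score(model, X_train, y_train, cv=5)
print("CV accuracy: %.3f +/- %.3f" % (scores.mean(), scores.std()))

# Loadings: weight of each original feature in each component
pca = model.named_steps['pca']
loadings = pd.DataFrame(pca.components_, columns=iris.feature_names,
                        index=['PC1', 'PC2'])
print(loadings.round(2))
```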

Practical FAQ

How do I choose the number of components to keep?

To choose the number of components, first examine the explained variance ratio, which indicates how much information each component captures. You can plot the cumulative explained variance and look for the 'elbow' point, where the incremental gain diminishes sharply. Alternatively, retain enough components to explain a high percentage of the variance (e.g., 95%).
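Scikit-Learn can apply the variance-threshold rule for you. If you pass a float between 0 and 1 as n_components, PCA keeps the smallest number of components whose cumulative explained variance exceeds that fraction. The minimal sketch below uses the standardized Iris data. It sets svd_solver='full' explicitly because that solver is documented to support fractional n_components.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

X_scaled = StandardScaler().fit_transform(load_iris().data)

# A float in (0, 1): keep the fewest components whose cumulative
# explained variance exceeds 95%
pca = PCA(n_components=0.95, svd_solver='full').fit(X_scaled)
print("Components kept:", pca.n_components_)
print("Cumulative explained variance:", np.round(np.cumsum(pca.explained_variance_ratio_), 3))
```

The fitted n_components_ attribute reports how many components were actually kept.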

Can I use PCA for non-linear dimensionality reduction?

Standard PCA is a linear method and may not capture non-linear relationships in your data. For non-linear dimensionality reduction, consider using Kernel PCA or methods like t-SNE (t-Distributed Stochastic Neighbor Embedding), which are specifically designed to handle non-linear relationships.

What is the difference between PCA and t-SNE?

PCA and t-SNE are both techniques for dimensionality reduction but serve different purposes. PCA is a linear method that transforms data into principal components to reduce dimensions while preserving as much variance as possible. t-SNE, on the other hand, is a non-linear technique that excels at visualizing high-dimensional data by mapping it to two or three dimensions while maintaining the local structure of the data.
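The sketch below illustrates the practical difference, using the handwritten digits dataset bundled with Scikit-Learn as an assumed example. PCA yields a reusable linear mapping. TSNE, by contrast, embeds one specific dataset and has no transform method for new samples. The trustworthiness score from sklearn.manifold ranges from 0 to 1, where higher means local neighborhoods are better preserved. It gives a rough comparison of how much local structure each 2-D projection keeps. perplexity=30 is simply the library default, written out for visibility.

```python
from sklearn.datasets import load_digits
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE, trustworthiness

digits = load_digits()
X = digits.data  # 1797 images of 8x8 pixels, flattened to 64 features

# PCA: a fitted linear map that can also transform new samples later
pca = PCA(n_components=2).fit(X)
X_pca = pca.transform(X)

# t-SNE: a non-linear embedding of this dataset only (TSNE has no transform method)
X_tsne = TSNE(n_components=2, perplexity=30, random_state=0).fit_transform(X)

# Score in [0, 1]: higher means points that are nearest neighbors in the
# 2-D embedding were also close in the original 64-D space
tw_pca = trustworthiness(X, X_pca, n_neighbors=5)
tw_tsne = trustworthiness(X, X_tsne, n_neighbors=5)
print(f"Trustworthiness  PCA: {tw_pca:.3f}  t-SNE: {tw_tsne:.3f}")
```

Distances between well-separated clusters in a t-SNE plot should not be read too literally. The method is tuned to preserve local neighborhoods, not global geometry.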

This guide provided a comprehensive look at how to use PCA with Scikit-Learn, from understanding the basics to applying advanced techniques and best practices. By mastering these concepts, you’ll be well-equipped to tackle complex datasets and extract meaningful insights through dimensionality reduction.